How AI Is Impacting Customer Success in 2026 (Based on Real Data and Statistics)

A data-backed look at how AI is changing Customer Success, from adoption and trust to role evolution and what sets top teams apart.

The Velaris Team

April 21, 2026

If you work in Customer Success, you’ve probably seen people in the industry bragging about how quickly AI has become part of their day-to-day and how it supercharges their efficiency. On the other end, you’ll hear doomsday warnings about AI making your role obsolete. Both narratives are compelling, but who’s actually telling the truth?

This article is based on our report, which surveyed 394 Customer Success professionals across roles, company sizes, and regions to take a closer look at what’s actually happening in CS. It covers how teams are using AI today, how the role is evolving, and where the biggest gaps still exist.

We found that the state of AI in CS is more nuanced than the extreme takes you’ll find online suggest. AI is pretty much everywhere, but it’s not yet deeply embedded into systems. Job satisfaction after adopting AI varies, depending largely on how it’s used. People’s roles are changing, not disappearing outright (though there are hints that this might not always be the case).

Some patterns are encouraging, others might raise eyebrows. Together, they’ll give you one of the clearest pictures that you can get of where Customer Success is heading next.

Key takeaways

- Nearly every CS team is using AI. The advantage now comes from how well it’s integrated into workflows.

- The majority of AI usage is still centered around prompting and productivity.

- Execution is getting faster, but the role is becoming more focused on judgment, prioritization, and customer relationships.

- Teams using more advanced AI report higher satisfaction and lower frustration.

- Many teams lack clear governance for AI, which creates risk as AI moves closer to customer-facing decisions.

- Strong teams are using AI to reduce low-value work and reinvest time into higher-impact activities.

How AI is actually being used by CS teams

Almost every CS team is using AI

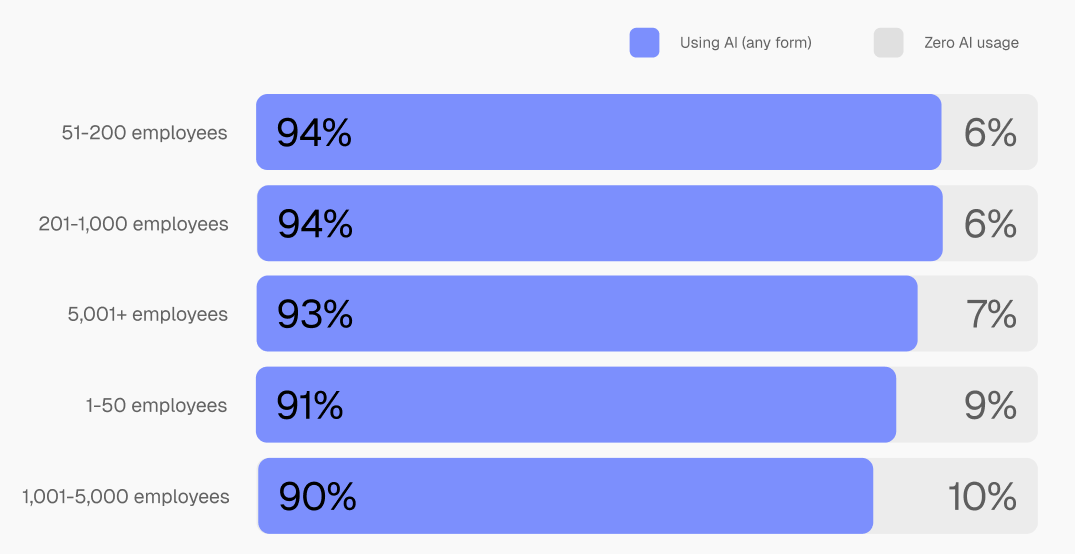

AI adoption seems more or less universal already. 94.8% of respondents report using AI in some form, with only a small minority, 5.2%, not using it at all. What’s more interesting is how consistent this is across the board. Whether it’s a small team or a large enterprise, adoption rates sit within a very narrow range.

That level of consistency tells us that AI usage can’t be considered a competitive differentiator on its own. It’s now more of a bare minimum requirement. Most teams have access to the same tools and are starting from a similar baseline. This means the real differentiator will have to come from how well people use AI.

Most teams are still at the earliest stage

It’s easy to look at near-universal adoption and assume the industry is further along than it actually is. Depth of usage is a whole different story.

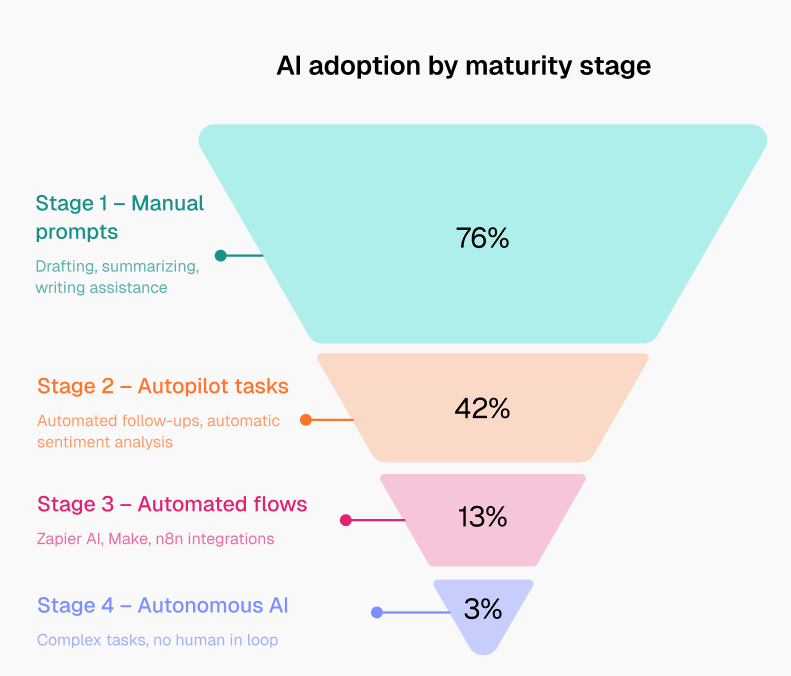

Around 76% of CS professionals are still using AI primarily for prompting tasks like drafting emails, summarizing calls, or pulling together notes.

These are high-frequency, time-consuming tasks, so the impact is immediate. A task that used to take 20 minutes now takes five. It’s one of the main reasons adoption has spread so quickly. The value is easy to see, and the barrier to entry is low.

But this layer of usage is still tied to individual actions. Someone has to prompt, review, and decide what to do next. These are no doubt useful capabilities, but they barely scratch the surface of what AI can do.

Only a small portion of teams have moved into more structured use. Roughly 13% are using AI within workflow automation, and just 3% report using it in more autonomous ways. In other words, most teams are still interacting with AI one task at a time.

What we can infer is that AI is helping people move faster, but it has not yet consistently changed how work is organized or delivered at a fundamental level.

Remember that differentiator we talked about? If a Customer Success team can turn isolated AI uses into systems that run consistently in the background, they’re very likely to take the lead.

Strategic use cases are emerging but not dominant

There are clear signs that teams are starting to push beyond basic productivity use cases. Some are using AI to analyze customer feedback, identify trends across accounts, or support decisions around churn risk and expansion opportunities.

These use cases move closer to what CS teams actually care about. AI has incredible potential to contribute to an understanding of customers and markets. It can help surface patterns that would be difficult to spot manually, especially across large portfolios.

That said, these applications are still less common. They require a level of operational maturity that many CS teams are still building toward. Processes need to be clearly defined. Data needs to be connected. There has to be enough consistency in how work is done for AI to plug into it reliably. Without that foundation, teams tend to fall back on safer, more controlled use cases where the cost of being wrong is lower.

How AI is changing customer success

Job loss is limited, but so is the reporting of it

Concerns around AI replacing Customer Success roles haven’t materialized in a widespread way. 83% of respondents report no reductions in CS headcount due to AI. Most teams still seem intact.

Nevertheless, there is a noticeable variability in how job loss is perceived across seniority levels. Leaders are significantly more likely to report confirmed reductions, while individual contributors are far more likely to say they’re unsure. That suggests decisions are happening at a level that isn’t always visible to the people closest to the ground work.

So while the majority of teams aren’t seeing direct impact on headcount, there’s still a sense that things are shifting in ways not everyone fully sees yet.

The role is becoming more strategic

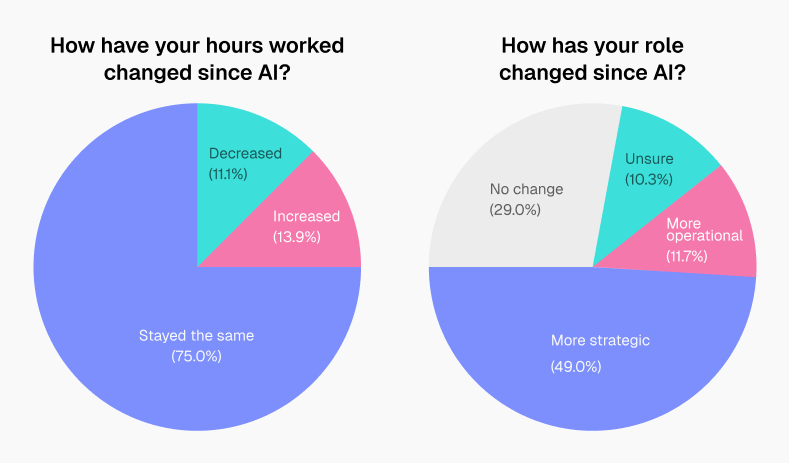

Where the impact is clearer is in how the role itself is evolving. 49% of CS professionals say their role has become more strategic since adopting AI. At the same time, most report that their working hours haven’t changed significantly.

The combination of those stats implies that AI isn’t exactly reducing the amount of work. It’s more that the type of work itself is changing.

This makes sense, since AI can handle tasks that are repetitive or time-intensive more quickly, which creates space. What fills that space depends on the team, but in many cases it’s shifting toward planning, prioritization, and deeper engagement with accounts.

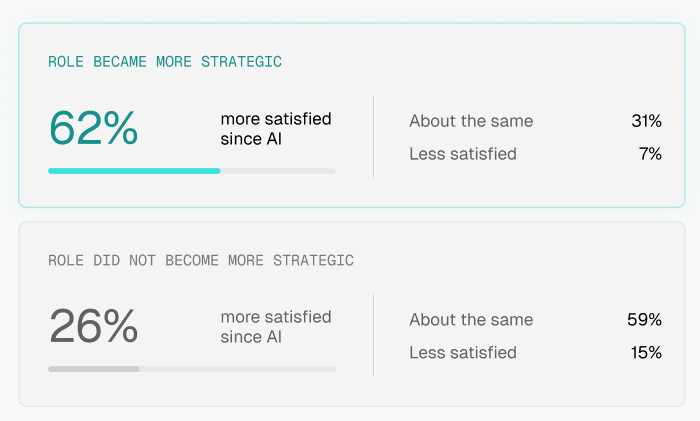

Strategic work leads to higher satisfaction

This shift has a direct impact on how people feel about their roles. Those who report moving into more strategic work are 2.4 times more likely to be satisfied. The same is true for teams using AI in more structured ways, particularly those that have moved beyond one-off prompts into some level of automation.

On the other hand, when AI is used in a way that adds overhead (more outputs to review, more systems to manage, or more noise to filter through), it can have the opposite effect on satisfaction. The presence of AI alone doesn’t improve the experience; how it’s used really matters.

A meaningful variation in satisfaction like that points to something beyond efficiency. CS professionals clearly enjoy relationship building, decision making, and ownership-related work more than menial tasks. The value of AI is very clear in this regard: it lets humans fulfil their ideal role by taking the routine burden off them.

But it’s worth remembering that when execution becomes easier, expectations around decision-making increase. Knowing what to do with the information AI provides becomes more important. So if CS teams want to maintain their increase in job satisfaction, they might want to lean more heavily into the strategic side of their roles and produce valuable business outcomes.

Human interaction isn’t going away

Despite the rise of AI, customer interaction remains largely unchanged. Only a small percentage of respondents, around 4%, report spending less time engaging with customers.

In many cases, interaction levels are either stable or increasing. That aligns with how CS operates in practice. Relationships, trust, and context still sit at the core of the role. AI can support those interactions, but CS teams are very reluctant to hand them over entirely.

Is it fair to trust AI?

There’s a clear confidence–reliability gap

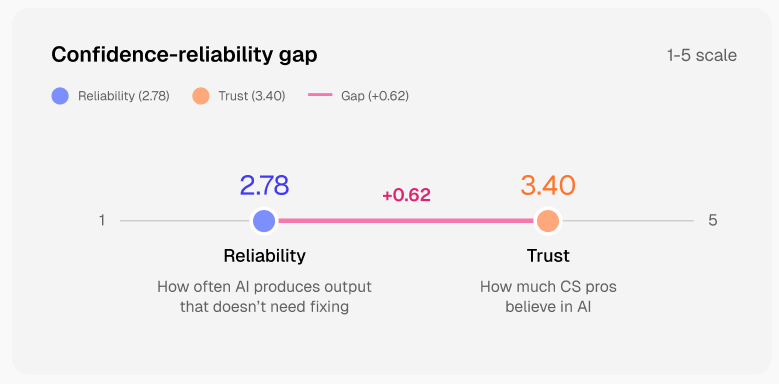

CS teams are getting more comfortable using AI, but that confidence isn’t fully matched by how reliable the outputs are. On average, trust in AI sits at 3.40 out of 5, while reliability is lower at 2.78.

That gap shows up in how people use it. AI is often the first step in getting something done, but rarely the final one. That might change as AI gets more advanced, but for now, teams don’t feel comfortable relying on it without a second look.

Most outputs still need human correction

For most teams, review is part of the process. 82% of CS professionals report regularly fixing or refining AI outputs before using them.

This isn’t necessarily a failure of the tools. It could just be a reflection of how they’re being used. AI can get you most of the way there, but the last stretch still depends on context, nuance, and judgment that only you can provide.

So AI is speeding things up, but not enough to eliminate the tedious work of carefully reviewing outputs.

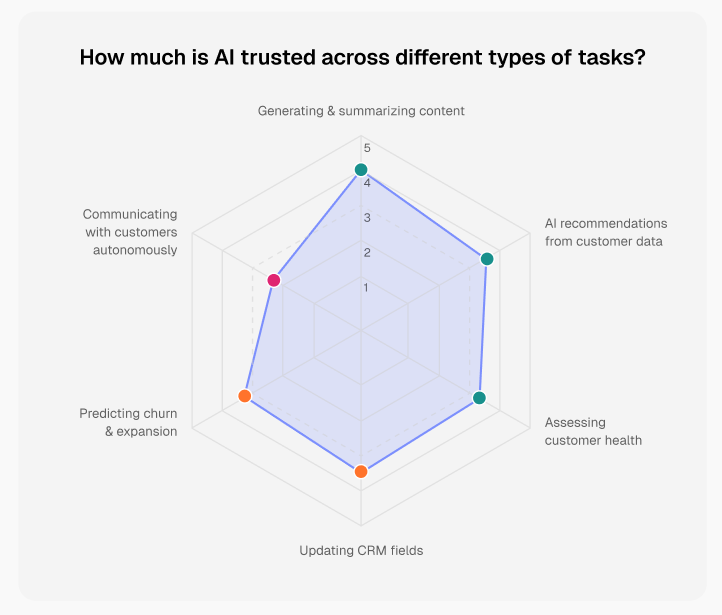

AI is trusted for content, not decisions

Not all use cases are treated the same. AI is most trusted when it comes to generating and summarizing content. Drafting emails, pulling together notes, or organizing information are flagged as relatively low-risk tasks.

But whenever AI moves closer to decision-making, trust plummets. Areas like assessing customer health, predicting churn, or handling customer communication autonomously carry more weight. The cost of getting those wrong is higher, so teams are more cautious.

This difference in trust is a good indicator of where AI can be allowed to operate independently, and where it needs to stay under tighter human control. Prioritizing accounts, handling risk, guiding strategy, and building trust all depend on factors that aren’t always captured in data alone.

Because of that, AI is likely to remain a co-pilot in this space. It reduces the effort required to get to an answer, but a human still needs to decide whether that answer is the right one.

How customer success professionals really feel about AI

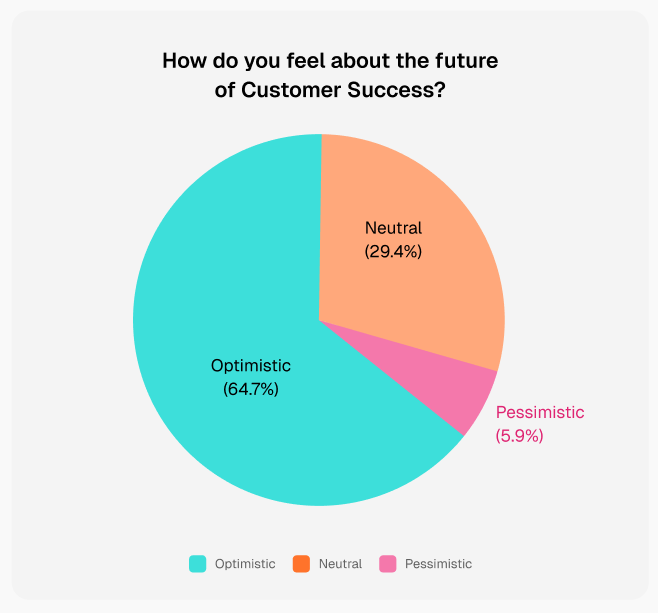

Most CS professionals are optimistic

Despite the uncertainty around AI, the overall sentiment in Customer Success is positive. 64% of respondents say they feel optimistic about the future of the role, while only 6% describe themselves as pessimistic.

That optimism has merit. Sure, most teams are still early in their adoption, and many of the challenges are plainly apparent. But there’s a general sense that AI is opening up new possibilities, even if the path forward isn’t fully clear yet.

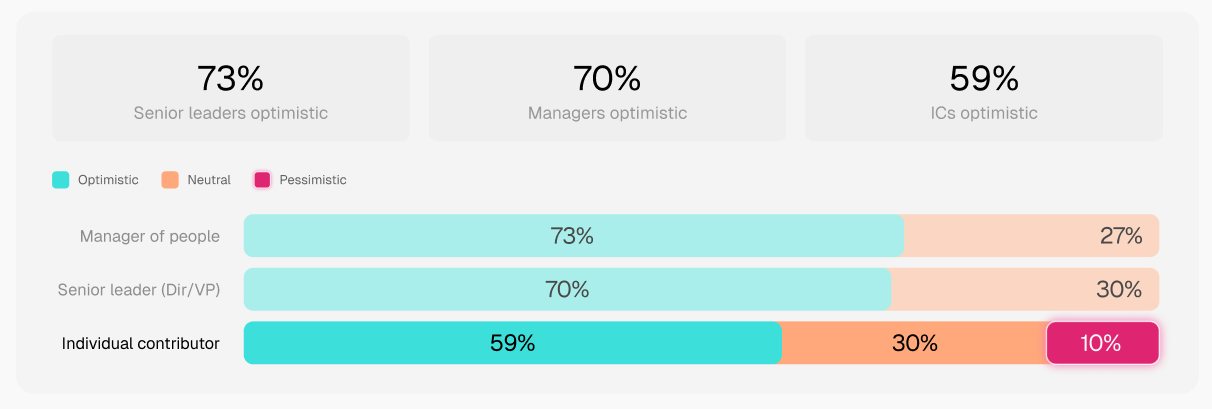

ICs and leaders see AI differently

There’s also a clear difference in how AI is perceived depending on where someone sits in the organization.

Senior leaders tend to be more optimistic, with 73% expressing optimism, and report higher levels of trust in AI. Individual contributors are more cautious, with only 59% expressing optimism. They’re the ones interacting with the outputs directly, fixing inaccuracies, and dealing with edge cases that don’t always show up at a higher level.

This is a reminder to be realistic in expectations, since it’s easy from a strategic viewpoint to demand more AI integration into your org’s workflows. Looking at it from the point of view of day-to-day execution might reveal a more genuine appraisal of AI.

AI maturity shapes sentiment significantly

Teams that use more advanced forms of AI show a 12-point jump in satisfaction (51% vs 39%) and nearly half the dissatisfaction rate (7% vs 13%).

This demonstrates that when AI is more autonomous, more embedded into workflows, and connected to real data, it tends to reduce friction.

AI should be applied in a way that taps into more of its potential. Surface-level usage just introduces more outputs to review, more context switching, and less clarity on what actually matters, with none of the big efficiency gains a mature AI system offers.

The biggest gap: AI governance and accountability

Many teams still lack clear AI rules

While adoption has moved quickly, governance hasn’t exactly kept pace. Admittedly, most organizations have some form of governance. But around a quarter (24.8%) of CS teams report having no clear guidelines for how AI should be used. Others (30.01%) operate with informal or loosely defined rules.

What this highlights is a problem in how AI is being introduced. Tools are being taken on too quickly, often at the individual or team level, while the processes around them are still evolving.

In practice, that means individuals are often left to decide what’s acceptable. What can be automated, what needs review, and what should never be handled by AI varies from person to person.

That flexibility can be advantageous for teams that want to move faster in the short term. But a lack of structured governance brings risks and inconsistencies.

Decisions can become inconsistent across accounts, sensitive information may be handled differently depending on the individual, and outputs sent to customers may vary in quality or accuracy. Over time, a lack of alignment makes it harder to maintain trust, both internally and with customers.

Major issues haven’t been reported yet, largely because most usage is still limited to lower-risk tasks. But as teams move toward deeper automation and more decision-oriented use cases, the stakes change and the absence of clear rules and accountability cannot be ignored.

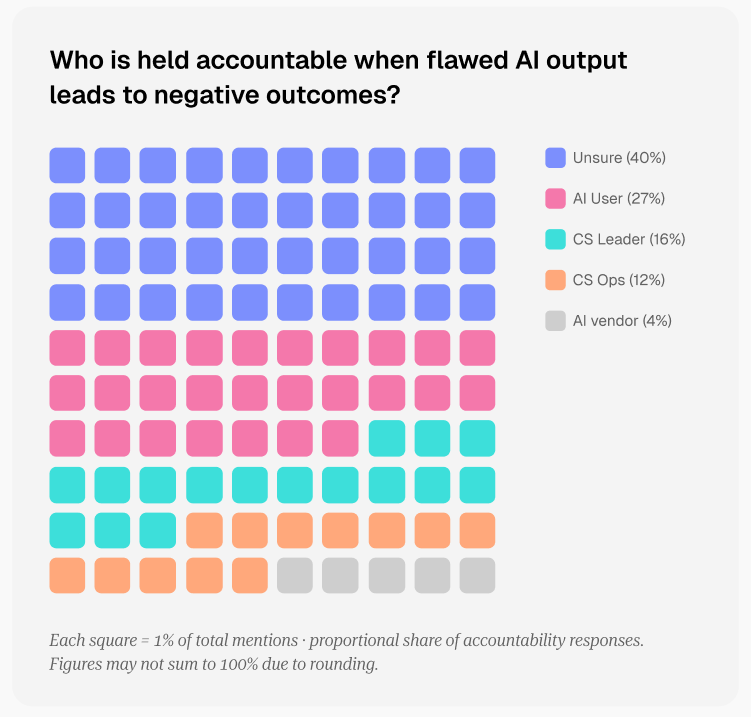

Ownership is unclear when AI fails

The lack of structure becomes more visible when something goes wrong. 40% of respondents say they’re unsure who is accountable when AI outputs lead to negative outcomes.

Even among those who do assign ownership, responsibility is spread across multiple roles, from the CSM using the tool to leadership or operations. A significant minority even point to the AI vendor itself.

Unclear ownership creates gray areas. Teams are using AI to influence customer-facing decisions, but without a clear answer on who ultimately has to own up when mistakes happen.

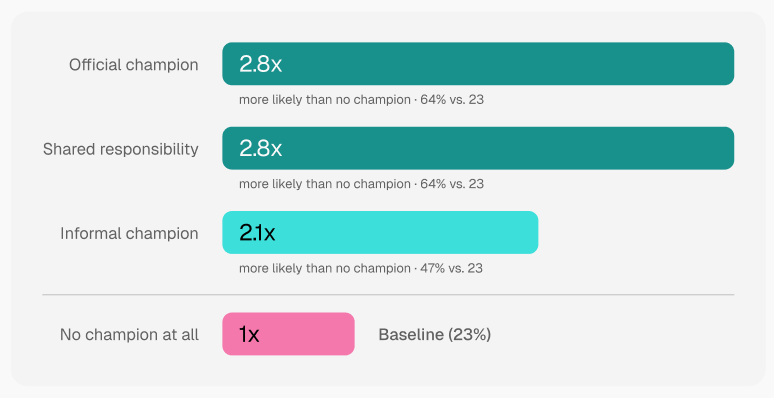

AI champions make a major difference

One of the clearest signals in the data is the impact of ownership. Teams that have a defined AI champion, whether formal or informal, are 2.8 times more likely to report a shift toward more strategic work.

That role doesn’t necessarily need to be complex. What matters is that someone is responsible for guiding how AI is used, helping the team move beyond ad hoc usage, and creating some level of consistency.

What separates high-performing CS teams using AI

They move from prompts to systems

The biggest shift happens when teams stop using AI as a one-off tool and start embedding it into how work runs.

In lower-maturity setups, AI is used reactively. A task comes up, someone writes a prompt, gets an output, and moves on. The process resets every time.

Higher-performing teams take a different approach. They identify repeatable workflows, onboarding, renewals, risk monitoring, follow-ups, and build AI into those processes.

That change reduces variability and removes the need to constantly “start from scratch.” It also makes AI more reliable, because it operates within a defined structure rather than isolated prompts.

So don’t ask AI to help each time. Create a system that runs with AI already integrated into it.

AI accelerates, they use it to elevate

It’s easy to use AI to increase output. More emails, more touchpoints, more updates. But that often comes with more to review and more room for inconsistency.

Stronger teams are more selective. They use AI to reduce effort on lower-value work, then reinvest that time into higher-impact activities. Account planning, deeper customer understanding, and more thoughtful engagement tend to take priority over simply doing more.

Not using AI for every single task may create some slight trade-offs in speed. But it's worth it for better decisions and more meaningful interactions.

They keep humans in the loop intentionally

High-performing teams treat AI outputs as a starting point, while the final output is always reviewed by humans.

Summaries, recommendations, and drafts are reviewed, refined, and shaped before they’re used. These teams treat that review as part of the process: necessary friction in the system.

By building that review step in intentionally, teams maintain quality while still benefiting from the speed AI provides. It also reinforces where responsibility is owned, with the human making the final call.

They invest in the three skills that don’t get automated

As AI takes on more of the execution layer, skills that it can’t replicate stand out.

- Curiosity drives better questions and a deeper understanding of customers.

- Critical thinking helps challenge outputs, interpret signals, and make decisions in context.

- Relationships remain central to trust, influence, and long-term retention.

These aren’t exactly novelties, but they do become more important as the role evolves. The teams that lean into them tend to get more value from AI, because they know how to direct it, question it, and apply it effectively.

Final takeaways

AI is already changing Customer Success, but the impact is more subtle than most narratives suggest. While fully autonomous systems are still rare, AI is becoming a consistent layer within day-to-day workflows.

What this creates is a transition period. Teams are adjusting to new ways of working, figuring out where AI fits, and learning what to trust. Some are starting to see clear gains, others are still dealing with friction. Most are somewhere in between.

The teams that see the most value are the ones who are building around AI with intent, caution, and careful planning.

Want the full picture?

This article covers the key patterns, but our full report goes way deeper into the data. It breaks down how adoption varies by company size, how sentiment differs across roles, includes perspectives from CS leaders working through these changes in real time, and more.

Download the full report here: The State of AI in Customer Success Report 2026

A team with years of experience in Customer Success has come together to redefine CS with Velaris. One platform, limitless Success.